Analysis: Digging Deeper into the Report Behind the Cuts

An in-depth analysis of the APR Report raised more questions about how programs were chosen to be cut.

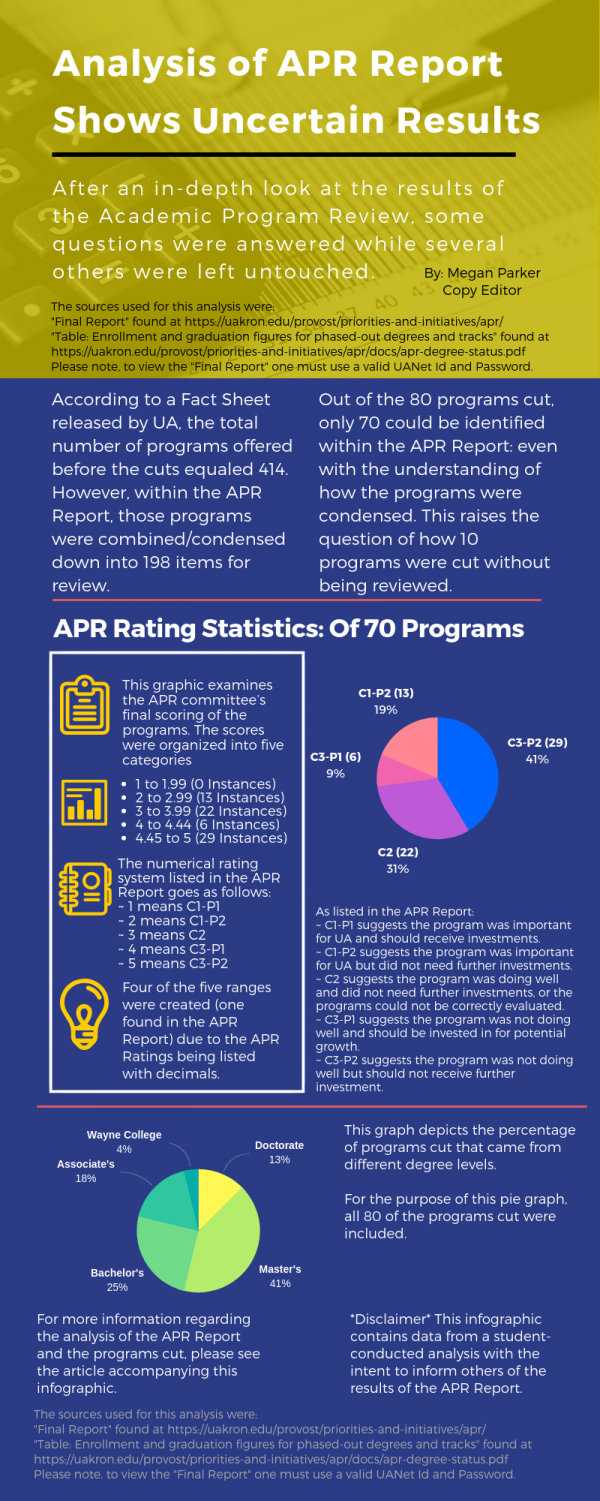

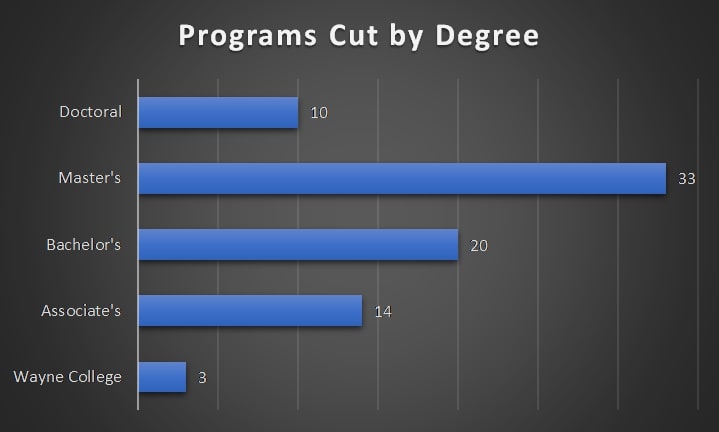

This graph shows that more Master’s and Bachelor’s programs were cut than any other degree type. For the purpose of this graph, all 80 programs cut were categorized.

September 17, 2018

This infographic provides a brief overview of the results from the analysis of the APR Report and the programs cut by UA.

Since The University of Akron stated plans to phase out 80 programs based on the results of a year-long Academic Program Review, several questions have been raised regarding the results of the APR and their correlation with the programs cut.

In an attempt to answer those questions and draw attention to how the APR Report was actually used in deciding which programs to cut, The Buchtelite conducted an objective analysis of both.

While the results of this student-led analysis are intended to inform the community of the actions taken by UA, it is important to note that confusion and questions persisted throughout the analysis.

Results of the Analysis of the APR Report and Programs Cut

According to a fact sheet released by UA, the University offered a total of 414 programs before the cuts. This number differs from the number of programs listed on the Academic Program Review Results page.

However, because reviewing all of those programs individually would have required extensive effort, they were condensed into a list of 198 items for the Academic Program Review Executive Committee to review.

The greatest number of programs cut came from Master’s degrees and tracks, totaling 33. Next were 20 Bachelor’s degrees and tracks, followed by 14 Associate degrees and tracks, plus three offered at Wayne College. Lastly, 10 Doctoral degrees and tracks were cut.

Since the total number of programs was condensed for the APR, not every program was reviewed on its individual merits and some programs were not even reviewed at all.

Of the 80 programs listed to be phased out by UA, only 70 could be identified within the APR Report. This raises the question of how 10 programs were chosen to be cut if they were not reviewed by the APR Review Faculty Team.

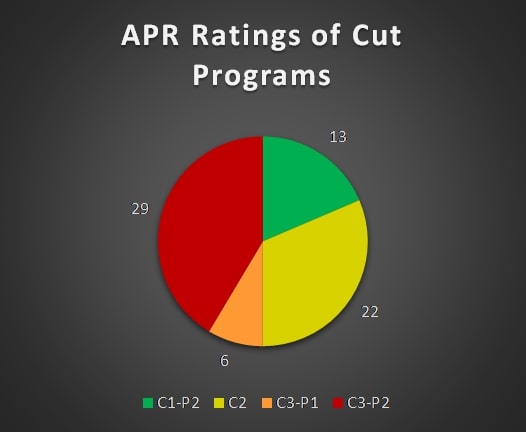

The process of rating a program was as follows: the Academic Program Review Executive Committee used the categories C1-P1, C1-P2, C2, C3-P1 and C3-P2 to rate programs.

According to the final APR Report, which requires a valid UANet ID and password to view, those categories were meant to identify which programs were doing well and which should receive further investment.

C1 meant “the program was distinctive and important for UA. Here, Priority P1 meant that there is a need for additional resources in the program and that such investment could potentially yield tactical benefits to UA. Priority P2 meant that the program was doing well and could continue to thrive on its current resources.”

C2 meant “either (a) the program was solid and doing well and did not need additional resources at this time, or (b) the information in the self-study reports and Dean reports were inadequate to evaluate and the program could sink or swim on its own merits.”

C3 meant “the program performance was not as expected for one or more reasons. These programs need attention and further, more detailed, review. Here Priority P1 meant that it is important to consider investing in these programs because it can result in tactical or strategic benefits to UA and the region. Priority P2 meant that no investment is recommended.”

The above graph shows that 41 percent of the programs cut and listed in the APR Report received the lowest rating from the APR Review Faculty Team. However, 50 percent of the programs cut received higher ratings.

Within the APR Report, each of those categories corresponded to a number that was later used to rank the programs. The numerical categories were:

- C1-P1 = 1

- C1-P2 = 2

- C2 = 3

- C3-P1 = 4

- C3-P2 = 5

Since the Academic Program Review Executive Committee consisted of 24 members, the ratings are listed with decimals to show the average composite rating for the APR.
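To illustrate how category ratings could turn into a decimal composite rating, here is a minimal Python sketch. It assumes the composite is a simple arithmetic mean of the members' numeric scores, which the APR Report as described here does not spell out. The function name `composite_rating` and the sample ballots are hypothetical and invented only for illustration; they are not data from the report.

```python
from statistics import mean

# Numeric value the APR Report assigned to each category (from the list above).
CATEGORY_SCORE = {"C1-P1": 1, "C1-P2": 2, "C2": 3, "C3-P1": 4, "C3-P2": 5}


def composite_rating(votes):
    """Average the numeric scores of individual ratings, rounded to two decimals.

    Assumption: the composite is a plain arithmetic mean of all ratings.
    """
    return round(mean(CATEGORY_SCORE[vote] for vote in votes), 2)


# Hypothetical ballots from 24 raters, made up for this example only.
example_votes = ["C3-P2"] * 14 + ["C3-P1"] * 8 + ["C2"] * 2
example_rating = composite_rating(example_votes)
print(example_rating)
```

Under this assumption, a program does not need a unanimous C3-P2 to land near the bottom of the scale; a majority of low ratings is enough to pull the average up toward 5.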

For the purpose of this analysis, the ranges used to categorize the programs based on their rating were:

- 1 to 1.99

- 2 to 2.99

- 3 to 3.99

- 4 to 4.44

- 4.45 to 5

In the APR Report, items receiving a rating between 4.45 and 5 were highlighted as recommendations to be cut. Of the 198 items listed, 39 fell into the category of cut recommendations.
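The ranges used in this analysis, together with the report's cut-recommendation band of 4.45 to 5, can be sketched in Python as follows. The function names `rating_range` and `recommended_for_cut` are hypothetical, and the sketch assumes ratings fall between 1 and 5 and are reported to two decimal places.

```python
def rating_range(rating):
    """Return the label of the analysis range that a composite rating falls into."""
    if not 1 <= rating <= 5:
        raise ValueError("composite ratings are expected to lie between 1 and 5")
    if rating >= 4.45:
        return "4.45 to 5"
    if rating >= 4:
        return "4 to 4.44"
    whole = int(rating)  # 1, 2 or 3 at this point
    return f"{whole} to {whole}.99"


def recommended_for_cut(rating):
    """True when a rating sits in the band the APR Report highlighted for cuts."""
    return rating >= 4.45


for sample in (1.5, 3.0, 4.2, 4.45, 5.0):
    print(sample, rating_range(sample), recommended_for_cut(sample))
```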

However, only 29 of those recommended items were chosen to be cut by UA, leaving 10 of the low-rated items to continue. This means that 41 programs cut (of the 70 identified in the report) received higher ratings and were not recommended to be cut.
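The arithmetic behind these figures can be checked with a short Python sketch. Every number comes from this analysis; the variable names are hypothetical labels chosen for readability.

```python
# Figures reported in this analysis.
programs_cut = 80               # programs UA listed to be phased out
cut_found_in_report = 70        # cut programs that could be identified in the APR Report
items_recommended_for_cut = 39  # items rated between 4.45 and 5
recommended_and_cut = 29        # recommended items that UA actually cut

# Cut programs that could not be found in the report at all.
cut_not_in_report = programs_cut - cut_found_in_report

# Low-rated items that were left to continue.
recommended_but_kept = items_recommended_for_cut - recommended_and_cut

# Cut programs in the report that were not in the recommendation band.
cut_without_recommendation = cut_found_in_report - recommended_and_cut

# Share of identified cut programs that had been recommended for cuts.
share_recommended = round(100 * recommended_and_cut / cut_found_in_report)

print(cut_not_in_report, recommended_but_kept, cut_without_recommendation, share_recommended)
```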

Some of the programs cut that received high ratings in the APR Report include a doctorate in nursing, a master’s in economics, a bachelor’s in French, a bachelor’s in mathematics and an associate’s in allied health technology: radiologic technology.

The question of how 10 low-rated programs were left to continue while higher-rated programs were cut remains unanswered. These results raise another question: since those programs received high ratings, on what basis in the APR Report were they cut?

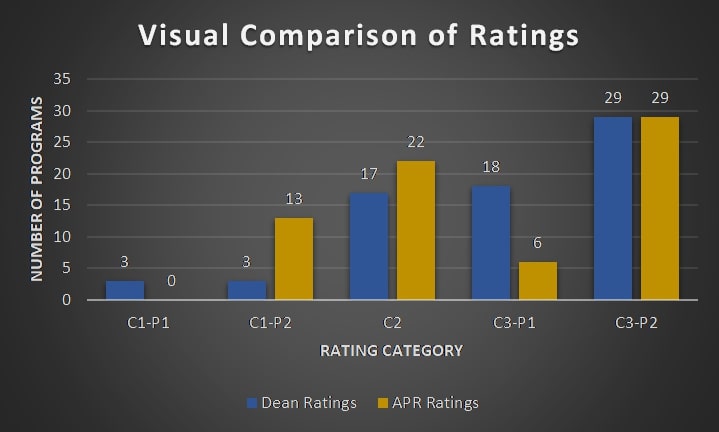

The above graph provides a side-by-side comparison of how the Deans and the APR Review Faculty Team rated the programs cut and listed in the APR Report.

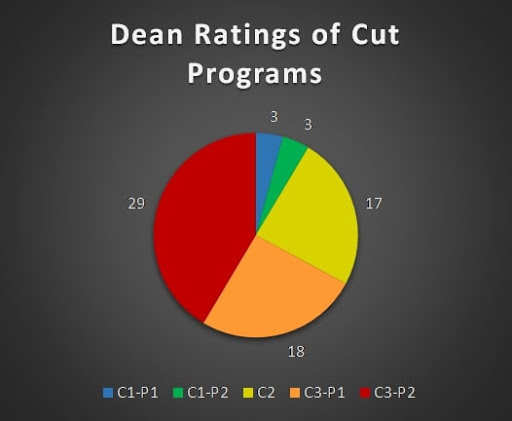

The analysis produced another conflicting result: three of the programs cut received the highest possible rating from the Dean of that program. These programs were a doctorate in adult development and aging, a doctorate in sociology and an associate in paraprofessional education.

The above graph shows that 41 percent of the programs cut and listed in the APR Report received the lowest rating from Deans. However, three programs were cut that received the highest rating from Deans.

Why were high-priority programs chosen to be cut instead of being invested in by UA?

One last question arises from this analysis: if UA states that these cuts were made based on the results of the APR Report, why were the ratings not listed alongside the cut programs in the document handed out during the Aug. 15 Board of Trustees meeting?

Is it possible that UA believed releasing the program ratings with the cuts in an easy-to-understand manner would have reflected poorly on the decision? Or were these cuts pre-planned, with the APR Report being used as a scapegoat?
